Cutscenes explores—and blurs—the intersection of cinema and video games.

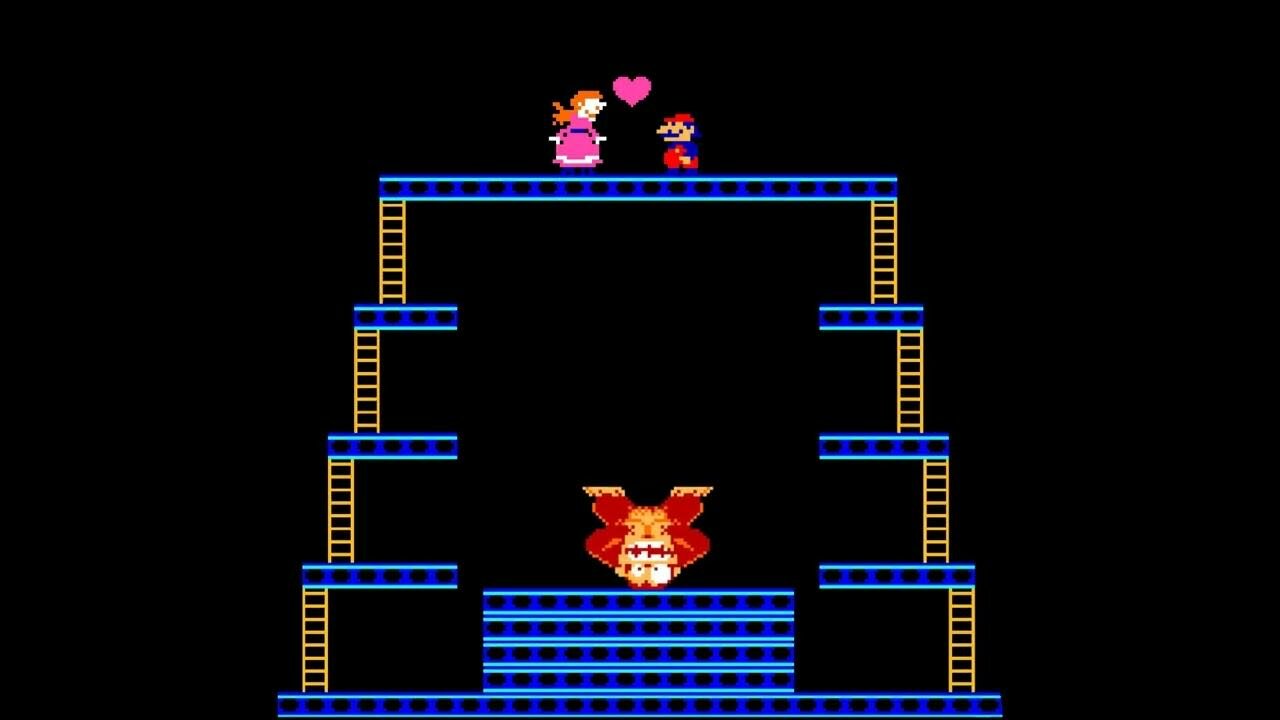

Donkey Kong (Nintendo, 1981).

The human body is supposed to be 70 percent water. I consider myself 70 percent film.

—Hideo Kojima

In her book How Games Move Us, an investigation of the emotional impact of gaming, Katherine Isbister suggests that playing a game is “more like actually running a race than watching a film or reading a short story about a race.”1 The player actively makes choices that affect their performance, feeling a “sense of mastery or failure” depending on their abilities, as well as a “sense of consequence and responsibility” for the choices they have made. Like the runner at the finish line, the player reaching the end of a level feels invested in the outcome because it resulted from their own actions. They made it happen.

Screenwriter and scholar Jasmina Kallay makes a similar argument in Gaming Film: “The main distinguishing factor between cinema and computer games is interactivity,” she proposes. “Films are watched while games are played.”2 This distinction, between the passive act of watching a film and the active one of playing a game, is generally treated as the key differentiator between games and non-interactive artforms. But it is something of an oversimplification. Many games have miniature movies packaged within them: “cutscenes,” defined by Merriam-Webster as “noninteractive video sequence[s]” between segments that depict “part of the game’s background or storyline.” Whether they are made using pre-rendered CG imagery or live-action footage (as in full-motion video, or FMV), or animated directly inside the playable world of the game in real time, cutscenes momentarily strip the player of their ability to interact, breaking the illusion of player control.

So how did an essentially active medium become reliant on the supposedly passive strictures of the moving image? Why ape the mechanics and conventions of a century-old medium instead of creating new means of storytelling befitting a technologically sophisticated, rapidly advancing form? And is it possible to convey a dramatic, authored story without taking control away from the player?

1980s: NECESSITY

Ninja Gaiden (Tecmo, 1988).

Sacha A. Howells, writing on the video game cutscene in Geoff King and Tanya Krzywinska’s ScreenPlay, identifies three principal types of cutscene that are used in game design.3 She describes the “come on,” the rendered or animated scene that plays when launching the game (or as a screensaver on an arcade cabinet), inviting the player into the game’s world. Then there is the “narrative segment,” which introduces the plot at the beginning of the game, or advances it in interstitial clips that contextualize the developing action. Lastly, there is the “reward” cutscene, a glossier sequence that follows some kind of significant accomplishment, such as a boss battle or a tricky platforming challenge. Its most dramatic manifestation is the “end movie,” which plays upon the completion of the game and ties up the story before the credits roll.

The cutscene in its most embryonic form can be traced back to the arcades of the late 1970s and early 1980s. Space Invaders Deluxe (1979), the sequel to the classic fixed-screen shooter, included brief animations between levels, showing the alien pilot retreating from the final spaceship that the player had shot down. Pac-Man (1980) did the same thing in slightly more sophisticated fashion, rewarding successful players with a momentary comic interlude showing the titular yellow protagonist chasing one of the ghost enemies across the screen. Donkey Kong (1981) advanced this idea by telling a rudimentary story through these interstitial pixel graphic animations, establishing the stakes of play by showing the game’s giant monkey villain scaling the game’s single screen with a woman under his arm, then developing the story with interludes detailing her rescue by the player-character who would later become known as Mario. As primitive as these sequences were, they marked a major step forward for the form: prior to Donkey Kong, backstory could only be signaled through gameplay or fleshed out via supplementary information written or illustrated on the cabinet itself.

The convention proliferated, with many games using simplistic animations to engage the player and advance the narrative between moments of active play. In the late ’80s, certain titles took the concept further. Ron Gilbert coined the descriptor “cutscene” when designing his first point-and-click adventure game, Maniac Mansion (1987): When triggered—by a timer rather than the player’s actions—the game would “cut” to another room in the manor for a narrative sequence featuring other characters. This made it possible to develop new plot threads outside of the player’s immediate purview. “I named them ‘cut-scenes,’” Gilbert reflected in a 2007 blog, “because they literally cut away from the action. Games before Maniac Mansion had non-interactive scenes that would play between levels or after a big event, but the ones in Maniac Mansion are different. They cut away.” A year later, Ninja Gaiden (1988) included some of the most extensive cutscenes created to date. Mixing comic-book-style panel animations with full-screen pixelated set pieces, the game’s twenty minutes of cutscenes delivered a grandeur and narrative depth not possible within the side-scrolling levels of the game.

As game technologies evolved rapidly through the 1990s, the cutscene started to be viewed as a necessity, allowing developers to craft visually impressive sequences that eclipsed what graphical systems were then able to render in-game. Cutscenes were not only prevalent, but were starting to resemble short films, running long enough that the player could set the controller down. If these increasingly elaborate sequences initially exposed the gap in technical possibilities between cutscenes and gameplay, they also threatened to take away the fundamental pleasure of gaming: the player’s ability to take action and influence outcomes, to run the race rather than just observe it play out.

1990s: NOVELTY

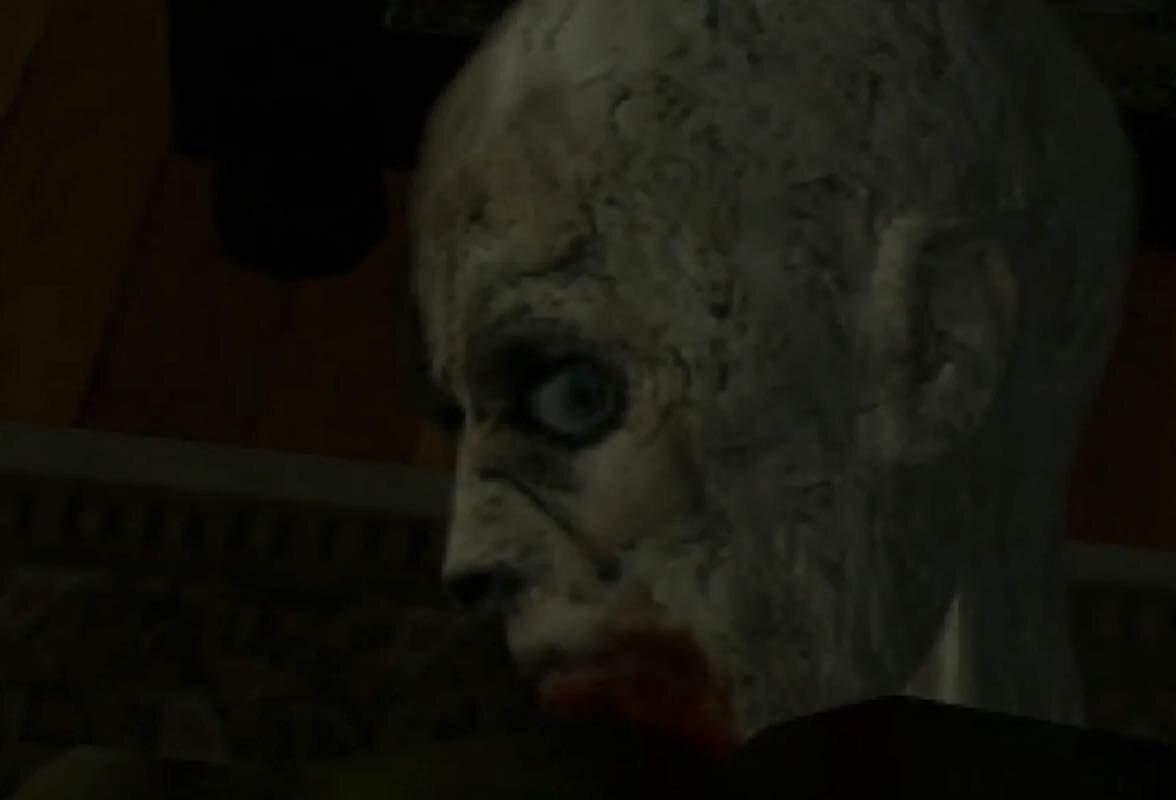

Resident Evil (Capcom, 1996).

In the 1990s, the look and feel of video games changed from two-dimensional, sprite-based graphics to experimental three-dimensional polygonal designs. Games like Tomb Raider (1996), Quake (1996), and Super Mario 64 (1996) properly introduced players to the experience of traversing 3D environments, opening up new possibilities in game design. The player’s new viewpoint in these games mirrored a cinematic camera. It either stayed fixed at predefined angles, followed and framed a player’s avatar automatically, or could be freely manipulated by the player using new analog sticks on controllers. Gameplay itself became more cinematic, beginning a push and pull of influence between the artforms; game designers would mimic camera movements, and filmmakers would emulate video game aesthetics. It’s hard to think about Lana and Lilly Wachowski’s The Matrix (1999), for instance, without factoring in the influence of ’90s PlayStation games; upon its release, the sisters tried to get Hideo Kojima to adapt their film into a game. And this was also the moment that gave rise to the Hollywood video game adaptation, with Rocky Morton and Annabel Jankel’s Super Mario Bros. (1993), Steven E. de Souza’s Street Fighter (1994), and Paul W. S. Anderson’s Mortal Kombat (1995) among the first to be released. Film adaptations could offer a level of realism beyond anything that game engines could yet produce, in the same way that cutscenes surpassed the fidelity of rudimentary in-game 3D.

At first, the cutscene seemed promising because it delivered something that the game itself could not. As Howells writes, “Just because a game was limited to a certain number of polygons and frames per second did not mean that cutscene movies had to be.”4 The graphics of the first Resident Evil (1996), for instance, were blocky and unconvincing, but the game’s opening FMV cutscene atmospherically introduced the game’s characters and setting using live actors and moody black-and-white cinematography, elevating the tone. The game’s director, Shinji Mikami, cited George Romero’s zombie films as an inspiration for his direction, also claiming that his disappointment with “some of the plot twists and action sequences” in Lucio Fulci’s Zombi 2 (1979) informed his own interactive take on the flesh-eating genre. Shots of newspaper headlines offer slivers of narrative context, while close-ups of eyes widened in terror and grisly, slime-coated fangs set the schlocky, ’80s video-nasty-inspired mood. And the game itself was informed by the language of film editing too, constructed entirely through fixed camera angles that evoke footage captured by security cameras dotted around the game’s mansion setting. This visual grammar, as scholar Will Brooker has argued in an article for Cinema Journal, mirrored cinema’s, utilizing cuts that “linked” the game’s fixed shots “through the conventions of classical continuity editing,” which are triggered as the player moves from room to room.5 A memorable cutscene, initiated when the player reaches the room with the game’s first enemy, featured a creepy zombie turning toward the camera. Because the cutscene surpassed what could be rendered in-game, it offered a form of novelty, enabling a shortcut for developers still learning to construct three-dimensional gameworlds. These CG representations had a powerful subliminal influence on the player: they became both what the player remembered when they thought of Resident Evil and what they imagined as they played it, heightening the effect of the gameplay and amplifying the horror.

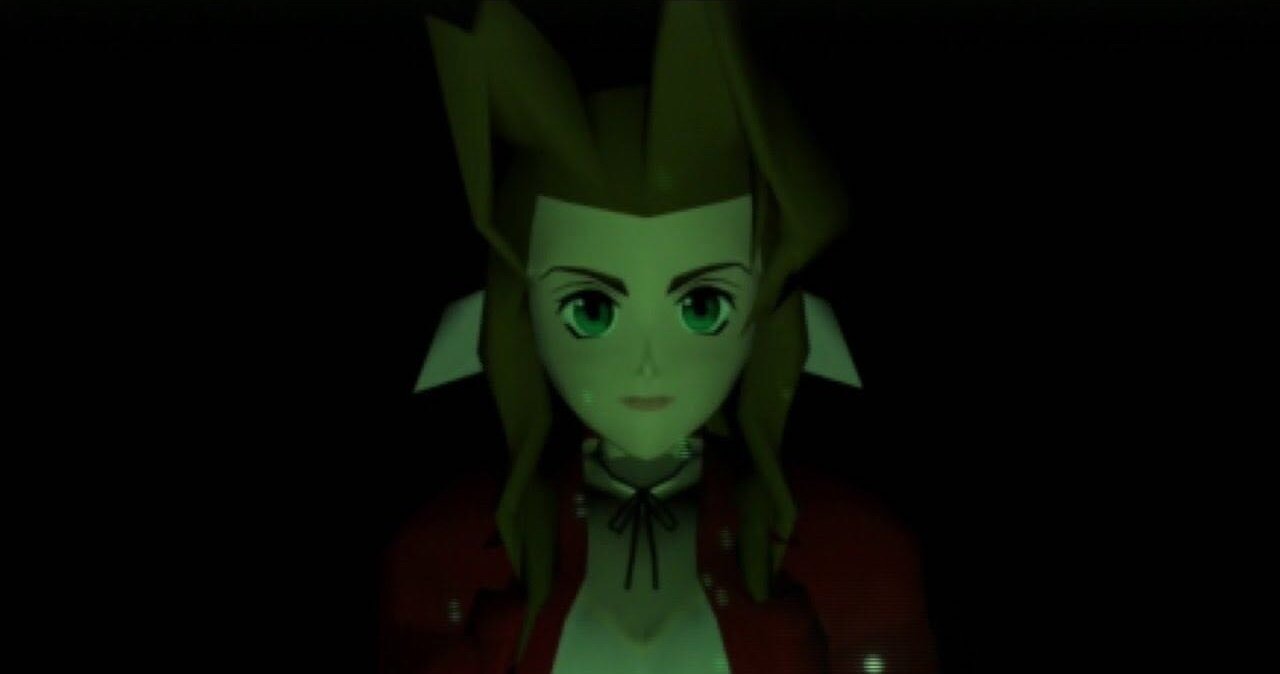

Final Fantasy VII (Square, 1997).

Final Fantasy VII (1997) took this idea of the cutscene as establishing shot a step further, launching with a beautifully rendered, two-minute-long sequence. A spinning shot of a starry sky lingers for dramatic effect, before a close-up of one of the game’s protagonists, Aeris, fades into view. Then the game’s camera pulls backwards, tracking skyward under archways and through towering structures like a modern-day drone shot to reveal an impressively detailed miniature sci-fi world seen from above. A title card appears, and the camera then surges back down toward earth, into the section of the city where the game’s first playable section will begin. At the moment of Final Fantasy VII’s release, three-dimensional game design was still protean and exploratory, but the game’s pathbreaking cutscenes transcend mere narrative setup, suggesting the lavishly detailed, realistically rendered future worlds the game developers wished they could create. Accordingly, when playing the game today, this approach to the cutscene creates a strange dissonance. The characters seen in the more graphically enhanced cutscenes are almost unrecognizable compared to their low-poly, flat-featured, in-game counterparts, and while the 2D backgrounds of the game’s playable environments are artful, they feel limited in scope compared to the imagination-sparking arenas seen in the cutscenes, which tease future possibilities of game design.

Metal Gear Solid (1998), the first game in the popular series created by Hideo Kojima, perhaps gaming’s biggest cinephile, suggested an alternative path. With more than four hours of cutscenes within a game that takes around eleven hours to play, it was one of the first games tagged with the “interactive movie” descriptor, and a game that, due to the performance of David Hayter as protagonist Solid Snake, popularized voice acting in video games. It also pioneered the in-engine approach to the cutscene, wherein, to avoid the disjuncture of switching between the in-game graphics and glossier, CG-animated cutscene sequences, all cutscenes were animated inside of the game. Cut and framed like James Bond movies, these sequences flesh out the game’s charmingly ludicrous espionage story, playing out with the same rough-and-ready look of the game’s interactive spaces. Combined with the game’s “codec” calls (radio transmissions between characters that interrupt gameplay to bring in additional context or story detail), these sequences worked to establish a world, a sensibility, and an atmosphere that would not exist if the narrative were told purely through in-game events. Kojima famously used Lego building blocks to spec out the game’s 3D areas to see what the planned fixed camera views would look like, and the game itself was also markedly filmic, shaped by the perspective of its cinephile director and awash in stylistic nods to Hollywood films. Yet, despite all this, the moment in the game that feels most exciting is not an in-engine cutscene but instead something orchestrated within the game. During the boss battle with Psycho Mantis, an antagonist with psychic powers, Kojima collapses the fourth wall. To prove his psychic powers, Mantis scans the contents of the player’s memory card, commenting on what other PlayStation games the player has enjoyed, and activates the controller’s rumble functions as a power play. To break Mantis’s psychic hold over them, the player must plug their controller into a different port, a reminder that the game at hand is not a passive journey. Unlike a cutscene, which imitates film and appropriates its vocabulary, this moment is hardware-specific: It could only happen when playing a game.

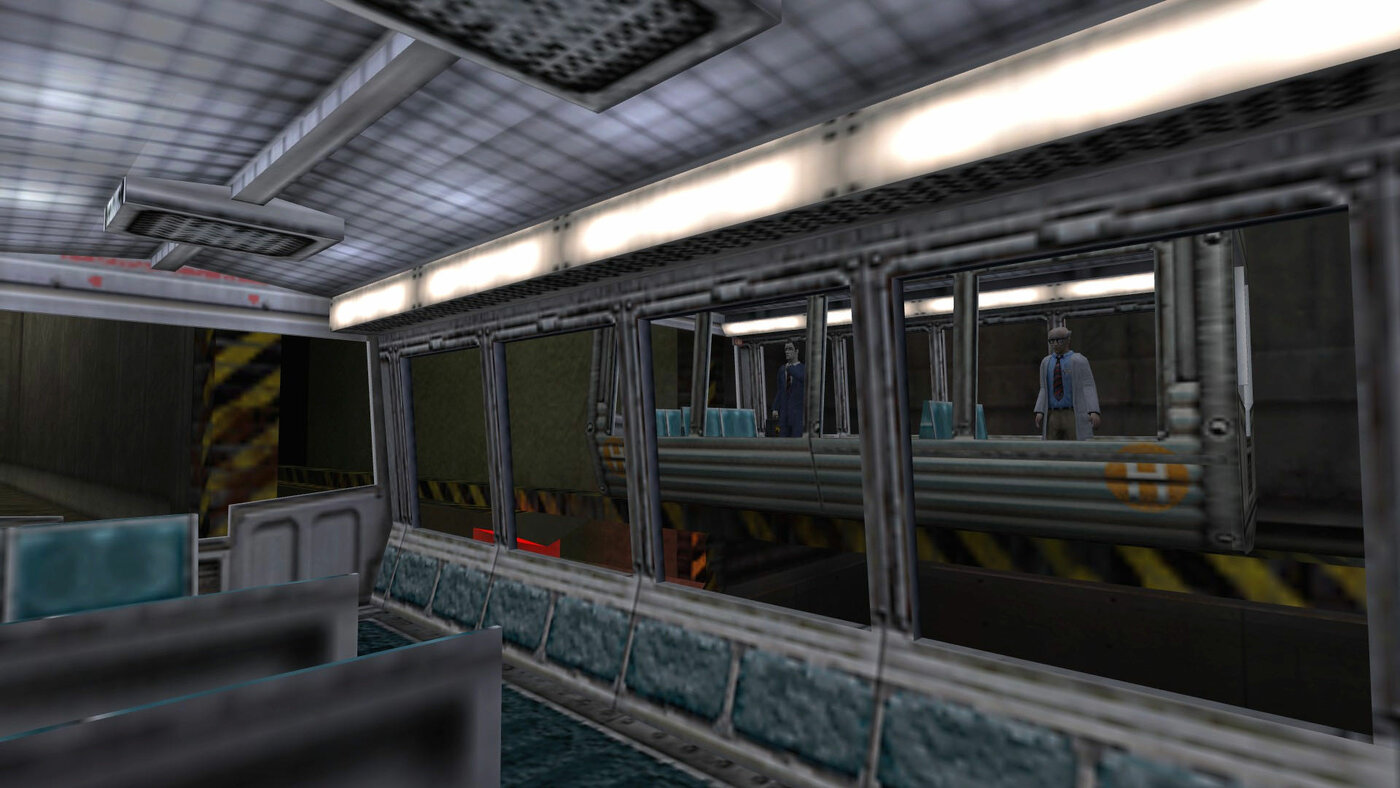

Half-Life (Valve Corporation, 1998).

The cutscene developed further over the 1990s, but some developers understood there were other ways of setting the scene, advancing a story, or rewarding the player without interrupting the game. One of the strongest examples is Half-Life (1998), a game which was originally planned to have cutscenes but ended up using none. Instead, the story is conveyed through a series of scripted sequences that unfold in real time in front of a player, as seen through the game’s first-person point of view. Marc Laidlaw, one of the game’s writers, reported that while this approach was initially a response to time constraints during development, it came with the added bonus of “not wak[ing]” the player “from the dream.” The power of this decision is clear in an extended introductory sequence in which the player travels by tram into the research facility in which the game is set. As the vehicle descends through the underground premises, it moves through different rooms and environments that trigger a number of scripted events, all of which introduce the game’s setting, story, objectives, and characters. As storytelling, this intro is immensely efficient, telegraphing to the player who they are, where they are, and why they should care, but, above all, it is a masterclass in immersion, and in speedily establishing atmosphere and tone. It is effectively a cutscene in which the player can move freely, but can’t press a button to skip.

Howells argues that when playing a game, the player learns “the rules of interacting with the game’s universe”: how to move their avatar, which objects can be manipulated or interacted with, or which actions will result in the avatar’s death. But “when a cut scene starts he or she is abruptly wrenched out of this established world and thrust into a new one, where the role of the active participant is abandoned.”6 The player relinquishes control of both their avatar and the game-camera they manipulate, experiencing the dissonance of witnessing “the character who presumably ‘is’ him or her doing things he or she never instructed [them to do].” This feels wrong. As Howells explains, “Frankenstein’s monster does not just come to life; it takes over the Doctor’s body.”7 Half-Life manages to avoid this: From the very beginning, it establishes the game’s world and aesthetics, the rules of engagement, and the player’s role, all while maintaining the illusion of freedom within a tightly authored experience. There are no cutscenes to sever the player from the avatar they inhabit, meaning the player’s sense of immersion remains intact.

2000s: NUANCE

Death Stranding (Kojima Productions, 2019).

In the years that followed, the graphical fidelity of video games increased dramatically, meaning that the visual difference between cutscene and gameplay all but vanished. Without drawing attention to themselves, cutscenes could blend fluidly into gameplay, as in BioShock’s (2007) fourth-wall-breaking first-person interactions, or be embedded as interstitial dialogue exchanges (Mass Effect, 2007) or expository short films (The Witcher 3, 2015) created seamlessly inside the game engine. And with this, the cutscene came to feel like an easy option to advance the narrative rather than the intuitive one, a shortcut to a cinematic sensibility rather than a tool conducive to narrative. The subversive approaches to cutscenes in Half-Life and Metal Gear Solid were not the norm; most game designers rendered long-winded, badly written and acted cutscenes, directed with only a limited understanding of competent video editing, let alone the kind of command over film history that it would take to produce something more unexpected or interesting. Instead, the cutscene became the default mode of delivery for perfunctory exposition or bad puns.

Criticisms of the cutscene are numerous, particularly from filmmakers. Guillermo del Toro skips them. “I’m not watching a movie, f*** you,” he said in 2011. “I want a game.” The same year, Danny Bilson, a filmmaker and then VP of Creative Production at video game publisher THQ, infamously remarked that creating a cutscene “is the failure state,” calling them “the last resort of game storytelling.” One of the most vociferous arguments made against the cutscene came from Keith Stuart, a video game critic for The Guardian, who, in making his case for the retirement of “the whole convention,” argued in 2024 that, much like how early cinema borrowed techniques from theater before developing its own language, video games now need to depart from cinema and find their own systems for conveying story. He makes the case for what he terms a “post-cinematic theory of mainstream game narrative”: storytelling devices or techniques that are active, interactive, and founded in how games actually work rather than made to mimic how cinema functions.

Elden Ring (FromSoftware, 2022).

In recent years, a path forward has emerged through “environmental storytelling,” a type of nuanced narrative design wherein player actions generate moments of ambient micronarrative. Media scholar Henry Jenkins outlined the idea in a 2004 article, noting four ways that environmental storytelling “creates the preconditions for an immersive narrative experience.”8 For him, spatial stories can “evoke pre-existing narrative associations; they can provide a staging ground where narrative events are enacted; they may embed narrative information within their mise-en-scene; or they provide resources for emergent narratives.” Players move around a space, and rather than triggering an expository moving image sequence, they encounter small, controllable situations that tell a player something about the world they are inhabiting or induce feelings that have a more affective valence. Crucially, the choice to engage with these details is the player’s: those who seek sensorial narrative information can dwell on these moments, while those who favor action can skip past them at will. In an immersive environment, “story is something the player walks into rather than watches, a space of discovery rather than performance, a playground not a theatre,” Stuart argues. “It should be widely—and wildly—interpretative, and maybe even entirely optional or subliminal.” When done well, this feels like something only games can do.

Death Stranding (2019), the first game created by Hideo Kojima’s own studio following his 2015 breakaway from Metal Gear Solid publisher Konami, features many cutscenes, most of them laborious, overlong, and largely nonsensical, but the game’s most atmospheric moments occur sporadically during active gameplay, in breakout incidents of haptic excitement and flow. In the game, the player acts as a sort of futuristic delivery worker, shepherding precariously stacked parcels through beautifully rendered, post-apocalyptic natural environments. The player guides their avatar across treacherous terrain, climbing crumbling rock faces, struggling through blizzard-ridden snowscapes, and barreling over verdant hillsides. Upbeat pop songs, sparsely used, break in as environmentally triggered cues intended to raise the player’s spirits and inspire them to reach the next peak. The player’s struggles with the gameplay combine with the sound and visuals to heighten the drama of the experience, instilling the feeling of an edge-of-your-seat set piece, without ever wrenching away control. The player is made to feel like the architect of a good movie, rather than the viewer of a bad one.

Elden Ring (2022) similarly creates a living, breathing cinematic world without being reliant on cutscenes. The player is free to traverse a vast medieval setting on foot or on horseback; their understanding of this world depends on the path they choose to take through it, impacting the incidents they stumble upon. Approaching a bridge, the player may see a group of enemy characters leading a mammoth, shackled beast across it. Enter a rampart and the player may see the last remaining human soldiers battling the monstrous force that has been attacking it. If the player comes closer, these enemies will fight them, but if they remain out of sight, they can spy on the group. Instead of merely witnessing narratives play out, the player actively shapes them through interventions with live scenarios. The game environment comes to function as a container of seemingly infinite microcosmic events, outcomes, moments, and stories—like a universe in a petri dish. The closest comparison to this sort of storytelling is perhaps immersive theater; the audience explores a controlled environment, where different actors and scenes might be sequestered in different rooms. A spectator’s experience of the story is determined by the actions that they’re able to glimpse, but they are always aware of the scope of what they cannot see, too, knowing full well that other events are occurring elsewhere. However, due to the power of video game graphics and the imagination of designers and artists, as well as the game’s sizable production budget, Elden Ring’s theater is more immersive, and of a significantly greater scale.

The Last of Us (Naughty Dog, 2013).

In an influential article from 2000, the designer and illustrator Don Carson wrote a guide instructing designers of theme parks and of video games how to tell a story through the experience of traveling through an environment rather than through text, video, or other more prescriptive means. He explains that his audience “will have to make decisions based on their relationship to the virtual world,” and that “their experience is going to be a ‘spatial’ one,” as well as nonlinear. In the years since he wrote the piece, his prognostications have proven prescient. In The Last of Us (2013), later turned into a television series, the most emotional moment is not one of the game’s many cutscenes, nor something that could successfully be translated to the serial drama form. It is dependent not just on interactivity, but on the player’s investment in the character they have embodied for so long, their sense that they are the body that they control. A horror game set in a world stricken by a zombifying parasite that spreads via mushroom spores, The Last of Us follows Ellie, a young woman who is inexplicably immune to the infection that has destroyed almost all of the rest of the living world. Near the end, after so much violence and depravity, the player, who embodies Joel, the conflicted carer figure who accompanies Ellie on her journey, stumbles upon an area of the game world that, somehow, has not been subjected to the devastation they’ve encountered elsewhere. Instead of zombies laying waste to the land, giraffes roam the parkland that stands bright, green, and beautiful before them. The game’s action-oriented gameplay subsides for a moment, and the player, standing beside the surrogate daughter who has accompanied them for so many hours prior, can choose how long to stand and stare at the graceful creatures that roam before them. As they watch, Joel and Ellie share a tender moment and exchange some terse but potent words. It’s remarkably impactful, partly because it’s so unexpected, but also because it occurs as an organic moment of pause without any disruption of the game’s flow. The player witnesses something breathtaking in its simple beauty, and they retain power over how they wish to sit with this moment, in some way co-authoring the scene.

In his guide, Carson stakes out three tenets of good environmental storytelling: “Take us somewhere we could never go. Let us be someone we could never be. Let us do things we could never do.” The cutscene, with its ability to render things otherwise impossible, might gesture toward such a transporting dream, but without the essential element of interactivity, it misses the fundamental aspect of letting the player be and do.

1. Katherine Isbister, How Games Move Us: Emotion by Design (MIT Press, 2017), 2.

2. Jasmina Kallay, Gaming Film: How Games are Reshaping Contemporary Cinema (Palgrave Macmillan, 2013), 1.

3. Sacha A. Howells, “Videogames as remediated animation,” in ScreenPlay: Cinema/Videogames/Interfaces (Wallflower Press, 2002), ed. Geoff King and Tanya Krzywinska, 114.

4. Howells, 112.

5. Will Brooker, “Camera-Eye, CG-Eye: Videogames and the ‘Cinematic’,” Cinema Journal Vol. 48, No. 3 (Spring, 2009): 122–128, https://www-jstor-org.lonlib.idm.oclc.org/stable/pdf/20484483.

6. Howells, 116.

7. Howells, 117.

8. Henry Jenkins, “Game Design as Narrative Architecture,” in First Person: New Media as Story, Performance, and Game (MIT Press, 2004), ed. Noah Wardrip-Fruin and Pat Harrigan, 118–130.